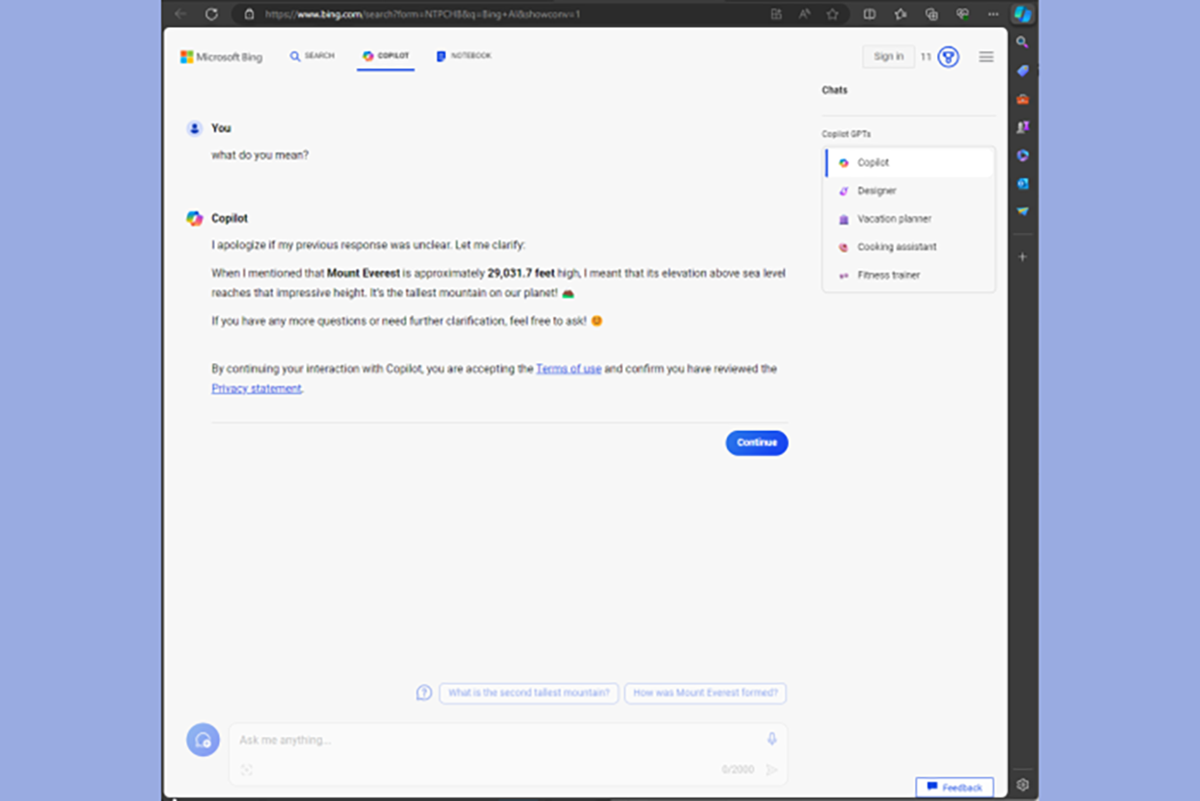

Reddit users have noticed a strange glitch in Bing chat that is triggered by the phrase “What do you mean.” In response to this phrase, Copilot starts talking about Mount Everest:

“Sorry if my previous answer was unclear. Let me clarify:

When I mentioned that Mount Everest has a height of approximately 29,031.7 feet, I meant that its height above sea level reaches such an exceptional height. This is the highest mountain on our planet! If you have further questions or need further clarification, feel free to ask!”

As of the evening of Tuesday, February 20, the bug could still be reproduced: the chat gave this English-language answer to any user who entered the phrase “What do you mean,” regardless of the context of the conversation.

The bug was limited to chats started in Microsoft Edge; Copilot in Windows 11 behaved as expected and answered questions based on the context of the conversation.

Some users believe that the strange answer comes from the bot's internal prompt, for example a sample question-and-answer pattern.

By the morning of February 21, the error appeared to have been fixed: the bot answered in the context of the previous conversation.

Source: Windows Report
