Either way, the neural network has learned to shoot.

Microsoft Research Associate Adrian de Winter published a scientific paper on GPT-4 and Doom. He set out to see whether a large language model could play Doom, and it turned out that it could.


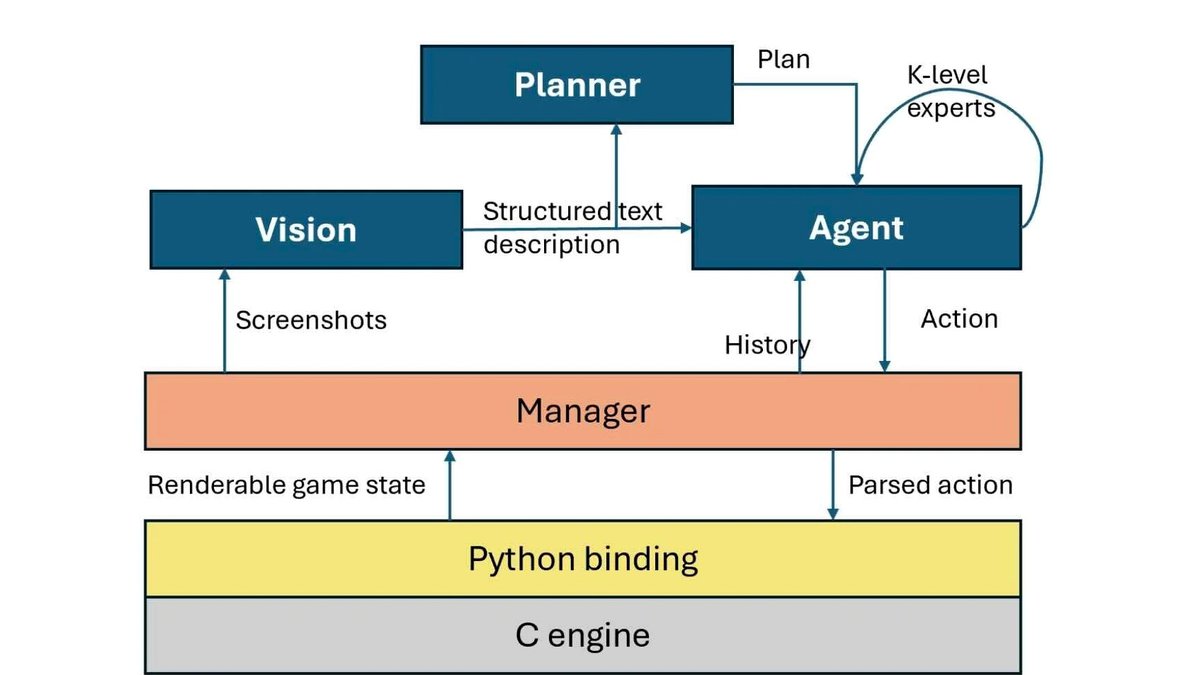

Notably, GPT-4 received no additional training on Doom's mechanics. Its only inputs were screenshots, which it interpreted on its own, and a handful of prompts.

The image recognition step gave the model a text description of what was happening in front of the player. GPT-4 then analyzed this description and decided what to do next, and its decisions were translated into commands sent to Doom.
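The screenshot-to-command loop described above can be sketched roughly as follows. This is a toy illustration under stated assumptions, not de Winter's code: the vision step and GPT-4 are replaced by hypothetical rule-based stubs (`describe_frame`, `decide`), a "screenshot" is reduced to a list of labelled objects with a horizontal position, and the command names in `COMMANDS` are invented placeholders rather than Doom's actual input interface.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical action -> game command mapping (placeholder names, not Doom's real API).
COMMANDS = {
    "move_forward": "FORWARD",
    "turn_left": "TURN_LEFT",
    "turn_right": "TURN_RIGHT",
    "open_door": "USE",
    "shoot": "ATTACK",
}


@dataclass
class Frame:
    """Stand-in for a screenshot: labelled objects with a horizontal
    position in [-1, 1] (negative = left of the crosshair)."""
    objects: list[tuple[str, float]]


def describe_frame(frame: Frame) -> str:
    """Stage 1 (hypothetical vision step): turn the screenshot into text."""
    if not frame.objects:
        return "Nothing of interest is visible."
    parts = []
    for label, x in frame.objects:
        if abs(x) < 0.1:
            side = "ahead"
        elif x < 0:
            side = "to the left"
        else:
            side = "to the right"
        parts.append(f"{label} {side}")
    return "You see: " + "; ".join(parts) + "."


def decide(description: str) -> str:
    """Stage 2 (hypothetical planner): a rule-based stand-in for GPT-4 that
    reads only the text description and returns an action name."""
    if "enemy ahead" in description:
        return "shoot"
    if "enemy to the left" in description:
        return "turn_left"
    if "enemy to the right" in description:
        return "turn_right"
    if "door ahead" in description:
        return "open_door"
    return "move_forward"


def to_command(action: str) -> str:
    """Stage 3: translate the decision into a game command."""
    return COMMANDS.get(action, "FORWARD")


def step(frame: Frame) -> str:
    """One loop iteration: screenshot -> text -> decision -> command.
    Nothing is remembered between calls."""
    return to_command(decide(describe_frame(frame)))


if __name__ == "__main__":
    print(step(Frame([("enemy", 0.0), ("door", 0.5)])))
```

In a real setup, `decide` would send the description and prompts to the language model instead of matching strings. Note that this sketch keeps no state between calls to `step`, so anything that leaves the frame is simply absent from the next decision.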

The neural network was able to move around the game world, interact with doors and fire weapons. However, several problems emerged.

First, GPT-4 did not retain context: for example, if an enemy moved off-screen, the model immediately forgot that it existed. Adrian tried to fix this with prompting, but without success.

Second, the model navigated the game world poorly and could get stuck. The cause is most likely the same as for ignoring off-screen enemies: difficulty understanding its own position relative to objects.

Finally, the project was hard to debug. When GPT-4 was asked to explain why it had made a particular decision, its explanations often contained incorrect information, and the model regularly hallucinated.

What de Winter finds notable, however, is something else: he had no trouble getting the model to fire a weapon (albeit a virtual one), and to do so fairly accurately. In his view, this raises questions about potentially dangerous uses of such models.
