You would be right to assume that it is also very large.

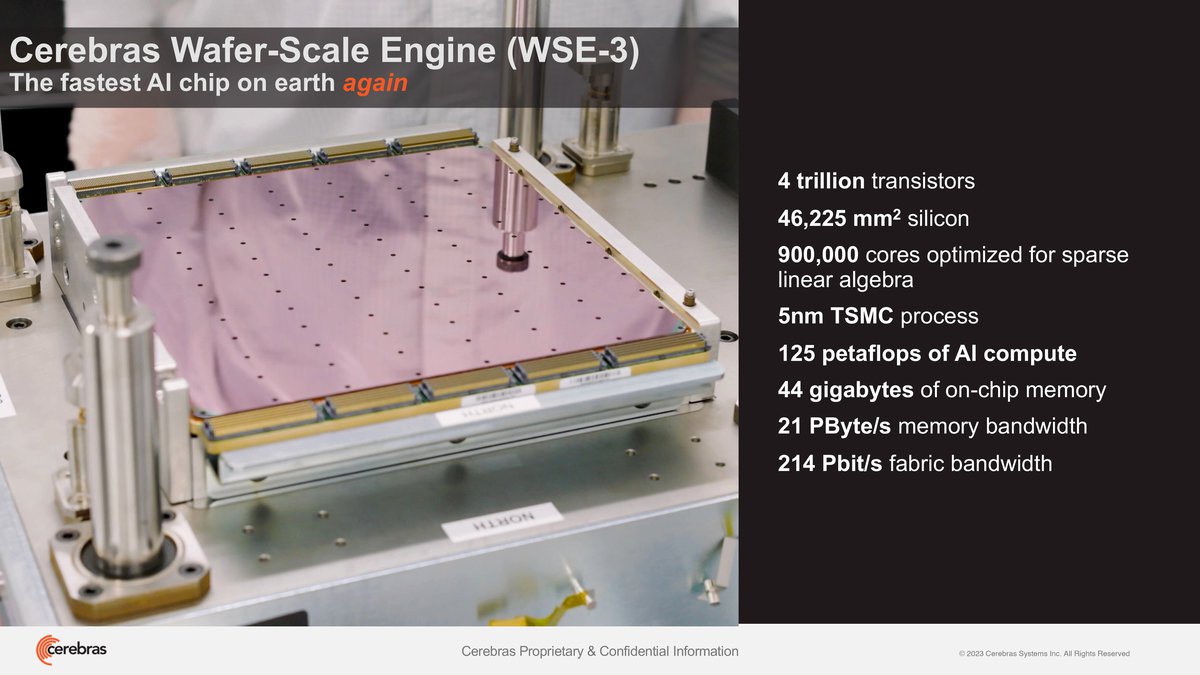

Cerebras Systems, known for building record-breakingly large processors, has announced a new chip, the Wafer Scale Engine 3 (WSE-3), designed for artificial intelligence computing.

The WSE-3 is manufactured on TSMC's 5nm process and packs 4 trillion transistors. Its 900,000 cores deliver 125 petaflops of performance on AI workloads.

Alongside its 900,000 cores, the processor has 44 gigabytes of on-chip memory with a bandwidth of 21 petabytes per second. The WSE-3 will power the CS-3 supercomputer, which is built to train large language models quickly.
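To put those figures in perspective, here is a rough back-of-the-envelope calculation of the memory and bandwidth available per core. It assumes decimal units (1 GB = 10^9 bytes) and an even split across all cores, which is a simplification for illustration and not something Cerebras has stated.

```python
# Figures quoted in the article; the even per-core split is an assumption.
CORES = 900_000
MEMORY_BYTES = 44 * 10**9            # 44 GB of on-chip memory
BANDWIDTH_BYTES_PER_S = 21 * 10**15  # 21 PB/s of memory bandwidth

# Average share of memory and bandwidth per core.
memory_per_core_kb = MEMORY_BYTES / CORES / 1_000
bandwidth_per_core_gb_s = BANDWIDTH_BYTES_PER_S / CORES / 10**9

print(f"Memory per core: {memory_per_core_kb:.1f} KB")
print(f"Bandwidth per core: {bandwidth_per_core_gb_s:.1f} GB/s")
```

Under these assumptions, each core works with roughly 49 KB of memory and about 23 GB/s of bandwidth.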

The company estimates that four CS-3 systems can train a Llama model with 70 billion parameters in one day. Notably, the supercomputer's power consumption remains the same as that of the previous generation.

Cerebras Systems claims that its system is noticeably more efficient than Nvidia's DGX H100 mini-supercomputer. In particular, the company says the CS-3 is approximately 8 times faster than the DGX H100 when training language models. The CS-3 also requires far less code to train language models than Nvidia's solutions, according to Cerebras.

The cost of the CS-3 has not yet been disclosed. Cerebras plans to use the CS-3 as the basis for the Condor Galaxy 3 cluster, which will consist of 64 such systems.
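As a rough estimate of what such a cluster could deliver, the per-chip figure can simply be multiplied out. This sketch assumes one WSE-3 per CS-3 system and perfectly linear scaling of peak performance, neither of which is stated in the announcement as described here.

```python
# Figures quoted in the article; one chip per system and linear scaling are assumptions.
SYSTEMS = 64                # CS-3 systems planned for Condor Galaxy 3
PETAFLOPS_PER_SYSTEM = 125  # AI performance of a single WSE-3

# Aggregate peak performance of the whole cluster.
total_petaflops = SYSTEMS * PETAFLOPS_PER_SYSTEM
total_exaflops = total_petaflops / 1_000

print(f"Aggregate peak: {total_petaflops} petaflops ({total_exaflops:.0f} exaflops)")
```

Under these assumptions, the cluster's aggregate peak comes to 8,000 petaflops, or 8 exaflops, of AI compute.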
