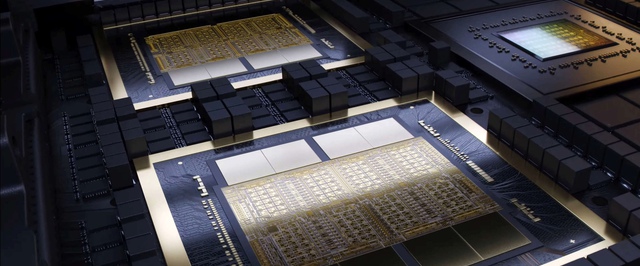

The chip carries 208 billion transistors on board.

Nvidia has unveiled Blackwell, a chip architecture designed for AI workloads. The Blackwell B200 chip succeeds the Hopper H100 used in Nvidia's current AI accelerators.

According to the company, Blackwell is much faster than Hopper. The chip, with its 208 billion transistors, delivers 20 petaflops when working with fp8 numbers and 40 petaflops when working with fp4 numbers, which is 2 to 5 times Hopper's performance.

Blackwell chips will be installed in GB200 systems, each combining two B200 chips with one Grace processor. Nvidia estimates that 2,000 Blackwell chips can train a model with 1.8 trillion parameters using 4 megawatts of power; previously this required 8,000 Hopper chips and 15 megawatts.
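To put Nvidia's training estimate in perspective, the quoted figures can be compared directly. The short Python sketch below uses only the numbers above; the variable names and the "cluster power per chip" measure are illustrative assumptions, and the per-chip value is simply total cluster power divided by chip count, not an official power rating for either chip.

```python
# Figures as quoted from Nvidia's own estimate for training a
# 1.8-trillion-parameter model.
hopper = {"chips": 8000, "power_mw": 15.0}
blackwell = {"chips": 2000, "power_mw": 4.0}

# How many times fewer chips and how much less power Blackwell needs.
chip_ratio = hopper["chips"] / blackwell["chips"]
power_ratio = hopper["power_mw"] / blackwell["power_mw"]


def cluster_kw_per_chip(cfg):
    """Total cluster power divided by chip count, in kilowatts.

    Illustrative only: this includes every other component in the
    cluster and is not a per-chip power specification.
    """
    return cfg["power_mw"] * 1000 / cfg["chips"]


print(f"Chip count reduction: {chip_ratio:.2f}x")
print(f"Power reduction: {power_ratio:.2f}x")
print(f"Hopper cluster power per chip: {cluster_kw_per_chip(hopper):.3f} kW")
print(f"Blackwell cluster power per chip: {cluster_kw_per_chip(blackwell):.3f} kW")
```

By these figures the job needs a quarter of the chips and a little over a quarter of the power, so the saving comes from needing fewer chips rather than from each chip drawing less.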

Along with the new chip, Nvidia introduced a network switch designed specifically to optimize distributed AI computing. The switch provides throughput of 1.8 TB/sec.

In addition to conventional accelerators, Nvidia will offer customers entire server racks built on Blackwell chips. For example, the GB200 NVL72 combines 36 Grace processors and 72 Blackwell chips into a system rated at 720 petaflops.

The most powerful option will apparently be the DGX SuperPOD, which combines eight racks for a total performance of 11.5 exaflops when working with fp4.

There is no information yet on the cost of Blackwell-based solutions.
